Artificial Intelligence Got Smart Faster Than Anyone Expected

Artificial intelligence got smart faster than anyone expected. It can write, reason, code, design, and diagnose. The intelligence problem – the one researchers spent decades worrying about – turned out to be more solvable than the world anticipated.

But a different problem has quietly taken its place. And it is more dangerous precisely because it is harder to see.

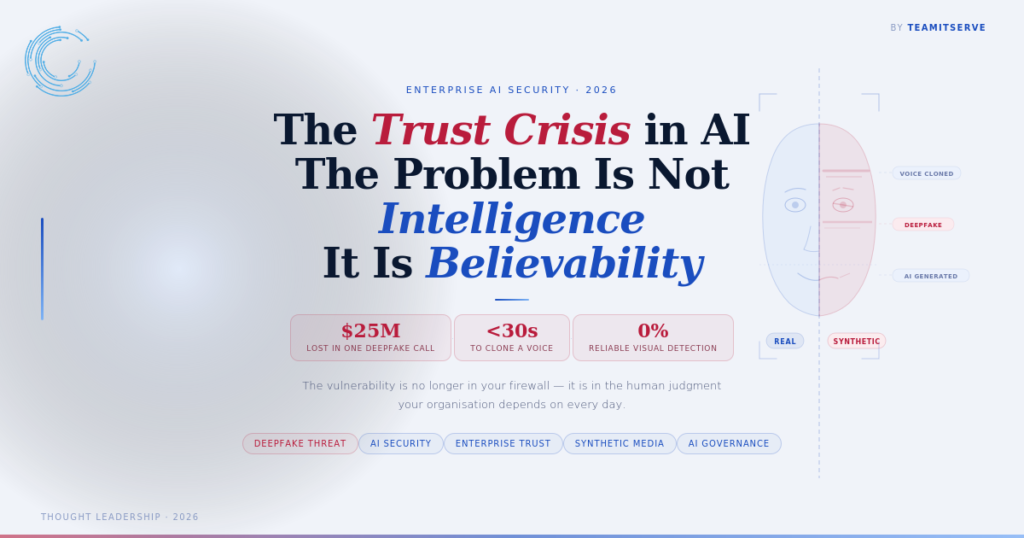

The problem is believability.

When Real and Fake Become Indistinguishable in AI

In early 2024, a finance employee at a multinational firm in Hong Kong joined a video call with people he believed were his chief financial officer and several colleagues. They instructed him to transfer funds. He complied. The amount was $25 million.

Every other person on that call was a deepfake.

This was not a sophisticated state-sponsored attack. It was a fraud operation using tools that are now widely accessible. The employee did everything right by conventional security standards. He verified faces. He heard familiar voices. He saw people he recognised.

None of it was real.

The Scale of What Has Changed in AI-Generated Content

A year ago, a trained eye could still detect AI-generated content. Today it cannot, at least not reliably, not at speed, and not at the volume enterprises operate at.

AI-generated emails can now slip past spam filters built on linguistic pattern detection. AI voice cloning can produce a convincing replica from less than thirty seconds of source audio. Video synthesis has crossed the threshold where compression artifacts – the last technical tell – are no longer a dependable signal.

The tools to do this are not locked behind government programmes or criminal syndicates. They are available, affordable, and increasingly automated.

Why This Is Now an Enterprise AI Security Problem

Security teams have spent years training employees to spot phishing emails with poor grammar and suspicious links. That training is now largely obsolete.

When the email reads perfectly, arrives from a spoofed but plausible address, references a real internal project, and is followed up by a voice message that sounds exactly like the CEO, the old detection framework does not hold.

The attack surface has shifted from systems to perception. The vulnerability is no longer in your firewall. It is in the human judgment your organisation depends on every day.

What Organisations Need to Do Differently About AI Trust

The answer is not to make employees more suspicious of everything. Chronic distrust destroys the speed and collaboration that organisations need to function.

The answer is architecture.

Verification that does not rely on identity alone

Voice and face are no longer sufficient proof. Enterprises need secondary confirmation layers – out-of-band verification for high-value transactions, cryptographic authentication for sensitive communications, and hard rules that no financial instruction above a defined threshold is actioned without a separate confirmed channel.
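
As a rough illustration of those two layers working together, the Python sketch below checks a payment instruction against a cryptographic tag and then applies the hard threshold rule. Everything named here is an assumption made for the example: the `PaymentInstruction` fields, the `OUT_OF_BAND_THRESHOLD` value, and the choice of a shared-key HMAC (a real deployment might well use public-key signatures and a proper key-management system instead).

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical policy value; a real threshold would be set by the organisation.
OUT_OF_BAND_THRESHOLD = 10_000


@dataclass(frozen=True)
class PaymentInstruction:
    requester: str
    beneficiary: str
    amount: int      # illustrative: whole currency units
    signature: str   # hex HMAC-SHA256 tag over the fields above


def sign(key: bytes, requester: str, beneficiary: str, amount: int) -> str:
    """Return a hex HMAC-SHA256 tag over the instruction fields."""
    message = f"{requester}|{beneficiary}|{amount}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def may_execute(instr: PaymentInstruction, key: bytes,
                confirmed_out_of_band: bool) -> bool:
    """Allow execution only if the tag is valid and, above the
    threshold, a separate channel has confirmed the request."""
    expected = sign(key, instr.requester, instr.beneficiary, instr.amount)
    if not hmac.compare_digest(expected, instr.signature):
        return False  # cryptographic authentication failed
    if instr.amount > OUT_OF_BAND_THRESHOLD and not confirmed_out_of_band:
        return False  # hard rule: high-value transfers need a second channel
    return True
```

The point of the design is that neither check depends on what anyone looked or sounded like on a call: a convincing face cannot produce a valid tag, and a valid tag alone still cannot move a large sum without the second channel.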

Detection tools integrated into workflow

AI-generated content detection is improving. Tools that flag synthetic media, analyse metadata, and score communication authenticity need to sit inside the tools employees already use – not in a separate system nobody opens.
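
One way to picture the metadata side of this is a small hook that runs on every inbound message and returns plain-language flags the mail client could display inline. The sketch below is a hedged illustration only: the `InboundMessage` fields, the trusted-domain list, and the upstream payment classifier are all hypothetical, and it covers header checks rather than synthetic-media detection itself.

```python
from dataclasses import dataclass
from email.utils import parseaddr


@dataclass(frozen=True)
class InboundMessage:
    from_header: str           # e.g. 'Jane Doe <jane@example.com>'
    reply_to: str              # empty string when the header is absent
    has_valid_signature: bool  # result of whatever signing scheme is in use
    requests_payment: bool     # assumed to come from an upstream classifier


def _domain(header: str) -> str:
    """Extract the lowercased domain from an address header."""
    _, address = parseaddr(header)
    return address.rpartition("@")[2].lower()


def authenticity_flags(msg: InboundMessage,
                       trusted_domains: set[str]) -> list[str]:
    """Return human-readable warnings; an empty list means no flags."""
    flags = []
    sender_domain = _domain(msg.from_header)
    if sender_domain not in trusted_domains:
        flags.append("sender domain not on trusted list")
    if msg.reply_to and _domain(msg.reply_to) != sender_domain:
        flags.append("reply-to domain differs from sender")
    if not msg.has_valid_signature:
        flags.append("no valid signature")
    # Escalate only when something else already looks wrong.
    if flags and msg.requests_payment:
        flags.append("payment request on a flagged message")
    return flags
```

Returning short text flags rather than a bare score is a deliberate choice for this sketch: a warning shown next to the message is more likely to be read than a number in a separate dashboard.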

Updated incident response for synthetic threats

Most breach playbooks were written for data exfiltration and ransomware. Very few account for the scenario where someone inside the organisation was socially engineered using a synthetic identity. That gap needs closing now.

The Deeper Shift in AI and Trust

The intelligence race in AI is largely won. Models will keep improving, but the gap between leading systems is narrowing. What is not narrowing is the gap between how fast synthetic content is evolving and how prepared organisations are to deal with it.

Trust was always the foundation of how businesses operate – with clients, with partners, with internal teams. AI did not create the trust problem. It industrialised it.

The organisations that treat believability as an infrastructure challenge – not a training exercise – are the ones that will stay ahead of it.