Most Companies Have AI Tools. Very Few Have an AI System

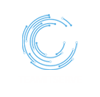

There is a difference, and it is widening fast.

Walk into almost any enterprise today and you will find AI everywhere. A writing assistant here. A chatbot there. A forecasting model plugged into the BI dashboard. An AI-powered inbox, a summarization tool, a code helper. The list grows every quarter.

And yet, despite all of it, the team is still chasing threads across five apps. The context still gets lost between handoffs. The left hand still does not know what the right hand is doing.

More tools did not solve the coordination problem. In most cases, they deepened it.

The Difference Between a Tool and a System

A tool answers a question. A system closes a loop.

When a sales rep uses an AI tool to draft a follow-up email, that is useful. But when an AI system detects that a deal has gone cold, pulls the account history from the CRM, drafts a contextual re-engagement message, routes it for approval, sends it, and logs the outcome, that is a different category of capability.

The difference is not intelligence. It is architecture.

Systems share context. They hand off between agents without losing state. They connect to your actual data, not a generic model trained on the public internet. They know what happened last week because they were there for it.

Tools do not remember. Systems do.

Why Fragmentation Is the Real Problem in 2026

The enterprises that are pulling ahead this year did not win by adopting more AI. They won by being intentional about how their AI works together.

A company running fifteen disconnected AI tools still has fifteen disconnected workflows. The overhead of managing them (different vendors, different data access, different outputs to reconcile) often costs more than the tools save.

One mid-market financial services firm consolidated four separate AI tools into a single agent system with shared data access and a unified workflow layer. Response time on client queries dropped by 60 percent. Not because the AI got smarter. Because it finally had the context it needed to act.

What Intentional AI Architecture Looks Like

The organizations getting this right are building with three things in mind.

Clear ownership. Every agent in the system has a defined scope: what it can access, what it can act on, and when it hands off. Ambiguity at the architecture level becomes chaos at the execution level.

Connected data. The system is only as useful as the information it can reach. Siloed data produces siloed outputs, regardless of how capable the underlying model is.

Governance that scales. As the system grows, so does its footprint in your business. Audit trails, access controls, and human review checkpoints are not optional features; they are the foundation.

The Question Worth Asking

Most AI conversations inside organizations start with “What tools are we using?”

The better question is: “Does our AI work together?”

If the answer is no, or even “sort of,” the gap between your organization and the ones building unified systems is growing every month. Adding another tool will not close it.

TeamITServe helps enterprises move from scattered AI tools to unified systems, from discovery to production. If your AI is not working together yet, that is where we start.
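
For readers who want to see the idea in code: the cold-deal loop described earlier (detect, pull history, draft, approve, log) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a reference implementation. The names `Context`, `Agent`, `run_loop`, the 14-day threshold, and the dictionary standing in for a CRM are all hypothetical. The point it tries to show is the three properties above: one shared context, an explicit write scope per agent, and an audit trail with a human approval checkpoint.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

COLD_AFTER_DAYS = 14  # assumed threshold for a "cold" deal


@dataclass
class Context:
    """Shared state that every agent reads and extends (hypothetical)."""
    account: str
    days_since_contact: int
    history: List[str] = field(default_factory=list)
    draft: Optional[str] = None
    approved: bool = False
    audit_log: List[str] = field(default_factory=list)


@dataclass(frozen=True)
class Agent:
    """An agent whose scope is the set of context fields it may write."""
    name: str
    may_write: frozenset

    def write(self, ctx: Context, field_name: str, value) -> None:
        # Clear ownership: reject writes outside this agent's scope.
        if field_name not in self.may_write:
            raise PermissionError(f"{self.name} may not write {field_name}")
        setattr(ctx, field_name, value)
        # Governance: every state change leaves an audit entry.
        ctx.audit_log.append(f"{self.name} set {field_name}")


researcher = Agent("researcher", frozenset({"history"}))
writer = Agent("writer", frozenset({"draft"}))
reviewer = Agent("reviewer", frozenset({"approved"}))


def run_loop(ctx: Context, crm: Dict[str, List[str]],
             approve: Callable[[str], bool]) -> str:
    """Run one pass of the loop; `approve` stands in for a human reviewer."""
    if ctx.days_since_contact < COLD_AFTER_DAYS:
        ctx.audit_log.append("outcome: skipped (deal is active)")
        return "skipped"
    # Connected data: the draft is built from the account's own history.
    researcher.write(ctx, "history", crm.get(ctx.account, []))
    last = ctx.history[-1] if ctx.history else "our last conversation"
    writer.write(ctx, "draft", f"Following up on {last}.")
    reviewer.write(ctx, "approved", approve(ctx.draft))
    outcome = "sent" if ctx.approved else "held for revision"
    ctx.audit_log.append(f"outcome: {outcome}")
    return outcome
```

Calling `run_loop(Context("Acme", 30), {"Acme": ["renewal demo"]}, approve=lambda draft: True)` walks the whole loop on one shared `Context`. The design choice worth noting is that handoffs are just writes to that shared object, so nothing is lost between steps and the log records who changed what.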
